Before we start

Overview

Teaching: 30 min

Exercises: 0 minQuestions

What is Python and why should I learn it?

Objectives

Present motivations for using Python.

Organize files and directories for a set of analyses as a Python project, and understand the purpose of the working directory.

How to work with Jupyter Notebook and Spyder.

Know where to find help.

Demonstrate how to provide sufficient information for troubleshooting with the Python user community.

What is Python?

Python is a general purpose programming language that supports rapid development of data analytics applications. The word “Python” is used to refer to both, the programming language and the tool that executes the scripts written in Python language.

Its main advantages are:

- Free

- Open-source

- Available on all major platforms (macOS, Linux, Windows)

- Supported by Python Software Foundation

- Supports multiple programming paradigms

- Has large community

- Rich ecosystem of third-party packages

So, why do you need Python for data analysis?

-

Easy to learn: Python is easier to learn than other programming languages. This is important because lower barriers mean it is easier for new members of the community to get up to speed.

-

Reproducibility: Reproducibility is the ability to obtain the same results using the same dataset(s) and analysis.

Data analysis written as a Python script can be reproduced on any platform. Moreover, if you collect more or correct existing data, you can quickly re-run your analysis!

An increasing number of journals and funding agencies expect analyses to be reproducible, so knowing Python will give you an edge with these requirements.

-

Versatility: Python is a versatile language that integrates with many existing applications to enable something completely amazing. For example, one can use Python to generate manuscripts, so that if you need to update your data, analysis procedure, or change something else, you can quickly regenerate all the figures and your manuscript will be updated automatically.

Python can read text files, connect to databases, and many other data formats, on your computer or on the web.

-

Interdisciplinary and extensible: Python provides a framework that allows anyone to combine approaches from different research (but not only) disciplines to best suit your analysis needs.

-

Python has a large and welcoming community: Thousands of people use Python daily. Many of them are willing to help you through mailing lists and websites, such as Stack Overflow and Anaconda community portal.

-

Free and Open-Source Software (FOSS)… and Cross-Platform: We know we have already said that but it is worth repeating.

Knowing your way around Anaconda

Anaconda distribution of Python includes a lot of its popular packages, such as the IPython console, Jupyter Notebook, and Spyder IDE. Have a quick look around the Anaconda Navigator. You can launch programs from the Navigator or use the command line.

The Jupyter Notebook is an open-source web application that allows you to create and share documents that allow one to create documents that combine code, graphs, and narrative text. Spyder is an Integrated Development Environment that allows one to write Python scripts and interact with the Python software from within a single interface.

Anaconda also comes with a package manager called conda, which makes it easy to install and update additional packages.

Research Project: Best Practices

It is a good idea to keep a set of related data, analyses, and text in a single folder. All scripts and text files within this folder can then use relative paths to the data files. Working this way makes it a lot easier to move around your project and share it with others.

Organizing your working directory

Using a consistent folder structure across your projects will help you keep things organized, and will also make it easy to find/file things in the future. This can be especially helpful when you have multiple projects. In general, you may wish to create separate directories for your scripts, data, and documents.

-

data/: Use this folder to store your raw data. For the sake of transparency and provenance, you should always keep a copy of your raw data. If you need to cleanup data, do it programmatically (i.e. with scripts) and make sure to separate cleaned up data from the raw data. For example, you can store raw data in files./data/raw/and clean data in./data/clean/. -

documents/: Use this folder to store outlines, drafts, and other text. -

scripts/: Use this folder to store your (Python) scripts for data cleaning, analysis, and plotting that you use in this particular project.

You may need to create additional directories depending on your project needs, but these should form

the backbone of your project’s directory. For this workshop, we will need a data/ folder to store

our raw data, and we will later create a data_output/ folder when we learn how to export data as

CSV files.

What is Programming and Coding?

Programming is the process of writing “programs” that a computer can execute and produce some (useful) output. Programming is a multi-step process that involves the following steps:

- Identifying the aspects of the real-world problem that can be solved computationally

- Identifying (the best) computational solution

- Implementing the solution in a specific computer language

- Testing, validating, and adjusting implemented solution.

While “Programming” refers to all of the above steps, “Coding” refers to step 3 only: “Implementing the solution in a specific computer language”. It’s important to note that “the best” computational solution must consider factors beyond the computer. Who is using the program, what resources/funds does your team have for this project, and the available timeline all shape and mold what “best” may be.

If you are working with Jupyter notebook:

You can type Python code into a code cell and then execute the code by pressing

Shift+Return.

Output will be printed directly under the input cell.

You can recognise a code cell by the In[ ]: at the beginning of the cell and output by Out[ ]:.

Pressing the + button in the menu bar will add a new cell.

All your commands as well as any output will be saved with the notebook.

If you are working with Spyder:

You can either use the console or use script files (plain text files that contain your code). The console pane (in Spyder, the bottom right panel) is the place where commands written in the Python language can be typed and executed immediately by the computer. It is also where the results will be shown. You can execute commands directly in the console by pressing Return, but they will be “lost” when you close the session. Spyder uses the IPython console by default.

Since we want our code and workflow to be reproducible, it is better to type the commands in the script editor, and save them as a script. This way, there is a complete record of what we did, and anyone (including our future selves!) has an easier time reproducing the results on their computer.

Spyder allows you to execute commands directly from the script editor by using the run buttons on top. To run the entire script click Run file or press F5, to run the current line click Run selection or current line or press F9, other run buttons allow to run script cells or go into debug mode. When using F9, the command on the current line in the script (indicated by the cursor) or all of the commands in the currently selected text will be sent to the console and executed.

At some point in your analysis you may want to check the content of a variable or the structure of an object, without necessarily keeping a record of it in your script. You can type these commands and execute them directly in the console. Spyder provides the Ctrl+Shift+E and Ctrl+Shift+I shortcuts to allow you to jump between the script and the console panes.

If Python is ready to accept commands, the IPython console shows an In [..]: prompt with the

current console line number in []. If it receives a command (by typing, copy-pasting or sent from

the script editor), Python will execute it, display the results in the Out [..]: cell, and come

back with a new In [..]: prompt waiting for new commands.

If Python is still waiting for you to enter more data because it isn’t complete yet, the console

will show a ...: prompt. It means that you haven’t finished entering a complete command. This can

be because you have not typed a closing parenthesis (), ], or }) or quotation mark. When this

happens, and you thought you finished typing your command, click inside the console window and press

Esc; this will cancel the incomplete command and return you to the In [..]: prompt.

How to learn more after the workshop?

The material we cover during this workshop will give you an initial taste of how you can use Python to analyze data for your own research. However, you will need to learn more to do advanced operations such as cleaning your dataset, using statistical methods, or creating beautiful graphics. The best way to become proficient and efficient at python, as with any other tool, is to use it to address your actual research questions. As a beginner, it can feel daunting to have to write a script from scratch, and given that many people make their code available online, modifying existing code to suit your purpose might make it easier for you to get started.

Seeking help

- check under the Help menu

- type

help() - type

?objectorhelp(object)to get information about an object - Python documentation

- Pandas documentation

Finally, a generic Google or internet search “Python task” will often either send you to the appropriate module documentation or a helpful forum where someone else has already asked your question.

I am stuck… I get an error message that I don’t understand. Start by googling the error message. However, this doesn’t always work very well, because often, package developers rely on the error catching provided by Python. You end up with general error messages that might not be very helpful to diagnose a problem (e.g. “subscript out of bounds”). If the message is very generic, you might also include the name of the function or package you’re using in your query.

However, you should check Stack Overflow. Search using the [python] tag. Most questions have already

been answered, but the challenge is to use the right words in the search to find the answers:

https://stackoverflow.com/questions/tagged/python?tab=Votes

Asking for help

The key to receiving help from someone is for them to rapidly grasp your problem. You should make it as easy as possible to pinpoint where the issue might be.

Try to use the correct words to describe your problem. For instance, a package is not the same thing as a library. Most people will understand what you meant, but others have really strong feelings about the difference in meaning. The key point is that it can make things confusing for people trying to help you. Be as precise as possible when describing your problem.

If possible, try to reduce what doesn’t work to a simple reproducible example. If you can reproduce the problem using a very small data frame instead of your 50,000 rows and 10,000 columns one, provide the small one with the description of your problem. When appropriate, try to generalize what you are doing so even people who are not in your field can understand the question. For instance, instead of using a subset of your real dataset, create a small (3 columns, 5 rows) generic one.

Where to ask for help?

- The person sitting next to you during the workshop. Don’t hesitate to talk to your neighbor during the workshop, compare your answers, and ask for help. You might also be interested in organizing regular meetings following the workshop to keep learning from each other.

- Your friendly colleagues: if you know someone with more experience than you, they might be able and willing to help you.

- Stack Overflow: if your question hasn’t been answered before and is well crafted, chances are you will get an answer in less than 5 min. Remember to follow their guidelines on how to ask a good question.

- Python mailing lists

More resources

Key Points

Python is an open source and platform independent programming language.

Jupyter Notebook and the Spyder IDE are great tools to code in and interact with Python. With the large Python community it is easy to find help on the internet.

Short Introduction to Programming in Python

Overview

Teaching: 30 min

Exercises: 0 minQuestions

What is Python?

Why should I learn Python?

Objectives

Describe the advantages of using programming vs. completing repetitive tasks by hand.

Define the following data types in Python: strings, integers, and floats.

Perform mathematical operations in Python using basic operators.

Define the following as it relates to Python: lists, tuples, and dictionaries.

Interpreter

Python is an interpreted language which can be used in two ways:

- “Interactively”: when you use it as an “advanced calculator” executing

one command at a time. To start Python in this mode, execute

pythonon the command line:

$ python

Python 3.5.1 (default, Oct 23 2015, 18:05:06)

[GCC 4.8.3] on linux2

Type "help", "copyright", "credits" or "license" for more information.

>>>

Chevrons >>> indicate an interactive prompt in Python, meaning that it is waiting for your

input.

2 + 2

4

print("Hello World")

Hello World

- “Scripting” Mode: executing a series of “commands” saved in text file,

usually with a

.pyextension after the name of your file:

$ python my_script.py

Hello World

Python Notebooks

During this workshop we’ll be using Google Colab. These are a Google implementation of Jupyter notebooks a web-based interactive development environment for Python (and other languages).

In the notebooks you have cells which can either be a code cell or a markdown cell.

- Code cells are just like the interactive python interpreter and allow you to write a block of code that you can then run all at once. You can run a cell by selecting it and pressing

Shift+Enter - Markdown cells are for including annotations using the Markdown syntax.

Within the notebook cells you primarily run python code, but it is also possible to run shell commands:

# in a colab notebook we start shell commands with an exclamation mark !

! ls

sample_data

Introduction to Python built-in data types

Strings, integers, and floats

One of the most basic things we can do in Python is assign values to variables:

text = "Data Carpentry" # An example of a string

number = 42 # An example of an integer

pi_value = 3.1415 # An example of a float

Here we’ve assigned data to the variables text, number and pi_value,

using the assignment operator =. To review the value of a variable, we

can type the name of the variable into the interpreter and press Return:

text

"Data Carpentry"

Everything in Python has a type. To get the type of something, we can pass it

to the built-in function type:

type(text)

<class 'str'>

type(number)

<class 'int'>

type(pi_value)

<class 'float'>

The variable text is of type str, short for “string”. Strings hold

sequences of characters, which can be letters, numbers, punctuation

or more exotic forms of text (even emoji!).

We can also see the value of something using another built-in function, print:

print(text)

Data Carpentry

print(number)

42

This may seem redundant, but in fact it’s the only way to display output in a script:

example.py

# A Python script file

# Comments in Python start with #

# The next line assigns the string "Data Carpentry" to the variable "text".

text = "Data Carpentry"

# The next line does nothing!

text

# The next line uses the print function to print out the value we assigned to "text"

print(text)

Running the script

$ python example.py

Data Carpentry

Notice that “Data Carpentry” is printed only once.

Tip: print and type are built-in functions in Python. Later in this

lesson, we will introduce methods and user-defined functions. The Python

documentation is excellent for reference on the differences between them.

Operators

We can perform mathematical calculations in Python using the basic operators

+, -, /, *, %:

2 + 2 # Addition

4

6 * 7 # Multiplication

42

2 ** 16 # Power

65536

13 % 5 # Modulo

3

We can also use comparison and logic operators:

<, >, ==, !=, <=, >= and statements of identity such as

and, or, not. The data type returned by this is

called a boolean.

3 > 4

False

True and True

True

True or False

True

True and False

False

Sequences: Lists and Tuples

Lists

Lists are a common data structure to hold an ordered sequence of elements. Each element can be accessed by an index. Note that Python indexes start with 0 instead of 1:

numbers = [1, 2, 3]

numbers[0]

1

A for loop can be used to access the elements in a list or other Python data

structure one at a time:

for num in numbers:

print(num)

1

2

3

Indentation is very important in Python. Note that the second line in the

example above is indented. Just like three chevrons >>> indicate an

interactive prompt in Python, the three dots ... are Python’s prompt for

multiple lines. This is Python’s way of marking a block of code. [Note: you

do not type >>> or ....]

To add elements to the end of a list, we can use the append method. Methods

are a way to interact with an object (a list, for example). We can invoke a

method using the dot . followed by the method name and a list of arguments

in parentheses. Let’s look at an example using append:

numbers.append(4)

print(numbers)

[1, 2, 3, 4]

To find out what methods are available for an

object, we can use the built-in help command:

help(numbers)

Help on list object:

class list(object)

| list() -> new empty list

| list(iterable) -> new list initialized from iterable's items

...

Tuples

A tuple is similar to a list in that it’s an ordered sequence of elements.

However, tuples can not be changed once created (they are “immutable”). Tuples

are created by placing comma-separated values inside parentheses ().

# Tuples use parentheses

a_tuple = (1, 2, 3)

another_tuple = ('blue', 'green', 'red')

# Note: lists use square brackets

a_list = [1, 2, 3]

Tuples vs. Lists

- What happens when you execute

a_list[1] = 5?- What happens when you execute

a_tuple[2] = 5?- What does

type(a_tuple)tell you abouta_tuple?

Dictionaries

A dictionary is a container that holds pairs of objects - keys and values.

translation = {'one': 'first', 'two': 'second'}

translation['one']

'first'

Dictionaries work a lot like lists - except that you index them with keys. You can think about a key as a name or unique identifier for the value it corresponds to.

rev = {'first': 'one', 'second': 'two'}

rev['first']

'one'

To add an item to the dictionary we assign a value to a new key:

rev = {'first': 'one', 'second': 'two'}

rev['third'] = 'three'

rev

{'first': 'one', 'second': 'two', 'third': 'three'}

Using for loops with dictionaries is a little more complicated. We can do

this in two ways:

for key, value in rev.items():

print(key, '->', value)

'first' -> one

'second' -> two

'third' -> three

or

for key in rev.keys():

print(key, '->', rev[key])

'first' -> one

'second' -> two

'third' -> three

Changing dictionaries

- First, print the value of the

revdictionary to the screen.- Reassign the value that corresponds to the key

secondso that it no longer reads “two” but instead2.- Print the value of

revto the screen again to see if the value has changed.

Functions

Defining a section of code as a function in Python is done using the def

keyword. For example a function that takes two arguments and returns their sum

can be defined as:

def add_function(a, b):

result = a + b

return result

z = add_function(20, 22)

print(z)

42

Key Points

Python is an interpreted language which can be used interactively (executing one command at a time) or in scripting mode (executing a series of commands saved in file).

One can assign a value to a variable in Python. Those variables can be of several types, such as string, integer, floating point and complex numbers.

Lists and tuples are similar in that they are ordered lists of elements; they differ in that a tuple is immutable (cannot be changed).

Dictionaries are data structures that provide mappings between keys and values.

Starting With Data

Overview

Teaching: 30 min

Exercises: 30 minQuestions

How can I import data in Python?

What is Pandas?

Why should I use Pandas to work with data?

Objectives

Navigate the workshop directory and download a dataset.

Explain what a library is and what libraries are used for.

Describe what the Python Data Analysis Library (Pandas) is.

Load the Python Data Analysis Library (Pandas).

Use

read_csvto read tabular data into Python.Describe what a DataFrame is in Python.

Access and summarize data stored in a DataFrame.

Define indexing as it relates to data structures.

Perform basic mathematical operations and summary statistics on data in a Pandas DataFrame.

Create simple plots.

Working With Pandas DataFrames in Python

We can automate the process of performing data manipulations in Python. It’s efficient to spend time building the code to perform these tasks because once it’s built, we can use it over and over on different datasets that use a similar format. This makes our methods easily reproducible. We can also easily share our code with colleagues and they can replicate the same analysis.

Starting in the same spot

To help the lesson run smoothly, let’s ensure everyone is in the same directory. This should help us avoid path and file name issues. At this time please navigate to the workshop directory. If you are working in IPython Notebook be sure that you start your notebook in the workshop directory.

A quick aside that there are Python libraries like OS Library that can work with our directory structure, however, that is not our focus today.

Our Data

For this lesson, we will be using the Portal Teaching data, a subset of the data from Ernst et al Long-term monitoring and experimental manipulation of a Chihuahuan Desert ecosystem near Portal, Arizona, USA.

We will be using files from the Portal Project Teaching Database.

This section will use the surveys.csv file that can be downloaded here:

https://ndownloader.figshare.com/files/2292172

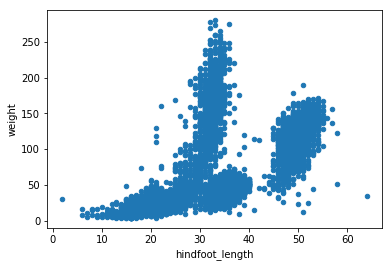

We are studying the species and weight of animals caught in sites in our study

area. The dataset is stored as a .csv file: each row holds information for a

single animal, and the columns represent:

| Column | Description |

|---|---|

| record_id | Unique id for the observation |

| month | month of observation |

| day | day of observation |

| year | year of observation |

| plot_id | ID of a particular site |

| species_id | 2-letter code |

| sex | sex of animal (“M”, “F”) |

| hindfoot_length | length of the hindfoot in mm |

| weight | weight of the animal in grams |

The first few rows of our first file look like this:

record_id,month,day,year,plot_id,species_id,sex,hindfoot_length,weight

1,7,16,1977,2,NL,M,32,

2,7,16,1977,3,NL,M,33,

3,7,16,1977,2,DM,F,37,

4,7,16,1977,7,DM,M,36,

5,7,16,1977,3,DM,M,35,

6,7,16,1977,1,PF,M,14,

7,7,16,1977,2,PE,F,,

8,7,16,1977,1,DM,M,37,

9,7,16,1977,1,DM,F,34,

About Libraries

A library in Python contains a set of tools (called functions) that perform tasks on our data. Importing a library is like getting a piece of lab equipment out of a storage locker and setting it up on the bench for use in a project. Once a library is set up, it can be used or called to perform the task(s) it was built to do.

Pandas in Python

One of the best options for working with tabular data in Python is to use the Python Data Analysis Library (a.k.a. Pandas). The Pandas library provides data structures, produces high quality plots with matplotlib and integrates nicely with other libraries that use NumPy (which is another Python library) arrays.

Python doesn’t load all of the libraries available to it by default. We have to

add an import statement to our code in order to use library functions. To import

a library, we use the syntax import libraryName. If we want to give the

library a nickname to shorten the command, we can add as nickNameHere. An

example of importing the pandas library using the common nickname pd is below.

import pandas as pd

Each time we call a function that’s in a library, we use the syntax

LibraryName.FunctionName. Adding the library name with a . before the

function name tells Python where to find the function. In the example above, we

have imported Pandas as pd. This means we don’t have to type out pandas each

time we call a Pandas function.

Reading CSV Data Using Pandas

We will begin by locating and reading our survey data which are in CSV format. CSV stands for

Comma-Separated Values and is a common way to store formatted data. Other symbols may also be used, so

you might see tab-separated, colon-separated or space separated files. It is quite easy to replace

one separator with another, to match your application. The first line in the file often has headers

to explain what is in each column. CSV (and other separators) make it easy to share data, and can be

imported and exported from many applications, including Microsoft Excel. For more details on CSV

files, see the Data Organisation in Spreadsheets lesson.

We can use Pandas’ read_csv function to pull the file directly into a DataFrame.

So What’s a DataFrame?

A DataFrame is a 2-dimensional data structure that can store data of different

types (including characters, integers, floating point values, factors and more)

in columns. It is similar to a spreadsheet or an SQL table or the data.frame in

R. A DataFrame always has an index (0-based). An index refers to the position of

an element in the data structure.

# Note that pd.read_csv is used because we imported pandas as pd

pd.read_csv("data/surveys.csv")

The above command yields the output below:

record_id month day year plot_id species_id sex hindfoot_length weight

0 1 7 16 1977 2 NL M 32 NaN

1 2 7 16 1977 3 NL M 33 NaN

2 3 7 16 1977 2 DM F 37 NaN

3 4 7 16 1977 7 DM M 36 NaN

4 5 7 16 1977 3 DM M 35 NaN

...

35544 35545 12 31 2002 15 AH NaN NaN NaN

35545 35546 12 31 2002 15 AH NaN NaN NaN

35546 35547 12 31 2002 10 RM F 15 14

35547 35548 12 31 2002 7 DO M 36 51

35548 35549 12 31 2002 5 NaN NaN NaN NaN

[35549 rows x 9 columns]

We can see that there were 35,549 rows parsed. Each row has 9

columns. The first column is the index of the DataFrame. The index is used to

identify the position of the data, but it is not an actual column of the DataFrame.

It looks like the read_csv function in Pandas read our file properly. However,

we haven’t saved any data to memory so we can work with it. We need to assign the

DataFrame to a variable. Remember that a variable is a name for a value, such as x,

or data. We can create a new object with a variable name by assigning a value to it using =.

Let’s call the imported survey data surveys_df:

surveys_df = pd.read_csv("data/surveys.csv")

Notice when you assign the imported DataFrame to a variable, Python does not

produce any output on the screen. We can view the value of the surveys_df

object by typing its name into the Python command prompt.

surveys_df

which prints contents like above.

Note: if the output is too wide to print on your narrow terminal window, you may see something slightly different as the large set of data scrolls past. You may see simply the last column of data:

17 NaN

18 NaN

19 NaN

20 NaN

21 NaN

22 NaN

23 NaN

24 NaN

25 NaN

26 NaN

27 NaN

28 NaN

29 NaN

... ...

35519 36.0

35520 48.0

35521 45.0

35522 44.0

35523 27.0

35524 26.0

35525 24.0

35526 43.0

35527 NaN

35528 25.0

35529 NaN

35530 NaN

35531 43.0

35532 48.0

35533 56.0

35534 53.0

35535 42.0

35536 46.0

35537 31.0

35538 68.0

35539 23.0

35540 31.0

35541 29.0

35542 34.0

35543 NaN

35544 NaN

35545 NaN

35546 14.0

35547 51.0

35548 NaN

[35549 rows x 9 columns]

Never fear, all the data is there, if you scroll up. Selecting just a few rows, so it is easier to fit on one window, you can see that pandas has neatly formatted the data to fit our screen:

surveys_df.head() # The head() method displays the first several lines of a file. It

# is discussed below.

record_id month day year plot_id species_id sex hindfoot_length \

5 6 7 16 1977 1 PF M 14.0

6 7 7 16 1977 2 PE F NaN

7 8 7 16 1977 1 DM M 37.0

8 9 7 16 1977 1 DM F 34.0

9 10 7 16 1977 6 PF F 20.0

weight

5 NaN

6 NaN

7 NaN

8 NaN

9 NaN

Exploring Our Species Survey Data

Again, we can use the type function to see what kind of thing surveys_df is:

type(surveys_df)

<class 'pandas.core.frame.DataFrame'>

As expected, it’s a DataFrame (or, to use the full name that Python uses to refer

to it internally, a pandas.core.frame.DataFrame).

What kind of things does surveys_df contain? DataFrames have an attribute

called dtypes that answers this:

surveys_df.dtypes

record_id int64

month int64

day int64

year int64

plot_id int64

species_id object

sex object

hindfoot_length float64

weight float64

dtype: object

All the values in a column have the same type. For example, months have type

int64, which is a kind of integer. Cells in the month column cannot have

fractional values, but the weight and hindfoot_length columns can, because they

have type float64. The object type doesn’t have a very helpful name, but in

this case it represents strings (such as ‘M’ and ‘F’ in the case of sex).

We’ll talk a bit more about what the different formats mean in a different lesson.

Useful Ways to View DataFrame objects in Python

There are many ways to summarize and access the data stored in DataFrames, using attributes and methods provided by the DataFrame object.

To access an attribute, use the DataFrame object name followed by the attribute

name df_object.attribute. Using the DataFrame surveys_df and attribute

columns, an index of all the column names in the DataFrame can be accessed

with surveys_df.columns.

Methods are called in a similar fashion using the syntax df_object.method().

As an example, surveys_df.head() gets the first few rows in the DataFrame

surveys_df using the head() method. With a method, we can supply extra

information in the parens to control behaviour.

Let’s look at the data using these.

Challenge - DataFrames

Using our DataFrame

surveys_df, try out the attributes & methods below to see what they return.

surveys_df.columns

surveys_df.shapeTake note of the output ofshape- what format does it return the shape of the DataFrame in?HINT: More on tuples, here.

surveys_df.head()Also, what doessurveys_df.head(15)do?surveys_df.tail()

Calculating Statistics From Data In A Pandas DataFrame

We’ve read our data into Python. Next, let’s perform some quick summary statistics to learn more about the data that we’re working with. We might want to know how many animals were collected in each site, or how many of each species were caught. We can perform summary stats quickly using groups. But first we need to figure out what we want to group by.

Let’s begin by exploring our data:

# Look at the column names

surveys_df.columns

which returns:

Index(['record_id', 'month', 'day', 'year', 'plot_id', 'species_id', 'sex',

'hindfoot_length', 'weight'],

dtype='object')

Let’s get a list of all the species. The pd.unique function tells us all of

the unique values in the species_id column.

pd.unique(surveys_df['species_id'])

which returns:

array(['NL', 'DM', 'PF', 'PE', 'DS', 'PP', 'SH', 'OT', 'DO', 'OX', 'SS',

'OL', 'RM', nan, 'SA', 'PM', 'AH', 'DX', 'AB', 'CB', 'CM', 'CQ',

'RF', 'PC', 'PG', 'PH', 'PU', 'CV', 'UR', 'UP', 'ZL', 'UL', 'CS',

'SC', 'BA', 'SF', 'RO', 'AS', 'SO', 'PI', 'ST', 'CU', 'SU', 'RX',

'PB', 'PL', 'PX', 'CT', 'US'], dtype=object)

Challenge - Statistics

Create a list of unique site ID’s (“plot_id”) found in the surveys data. Call it

site_names. How many unique sites are there in the data? How many unique species are in the data?What is the difference between

len(site_names)andsurveys_df['plot_id'].nunique()?

Groups in Pandas

We often want to calculate summary statistics grouped by subsets or attributes within fields of our data. For example, we might want to calculate the average weight of all individuals per site.

We can calculate basic statistics for all records in a single column using the syntax below:

surveys_df['weight'].describe()

gives output

count 32283.000000

mean 42.672428

std 36.631259

min 4.000000

25% 20.000000

50% 37.000000

75% 48.000000

max 280.000000

Name: weight, dtype: float64

We can also extract one specific metric if we wish:

surveys_df['weight'].min()

surveys_df['weight'].max()

surveys_df['weight'].mean()

surveys_df['weight'].std()

surveys_df['weight'].count()

But if we want to summarize by one or more variables, for example sex, we can

use Pandas’ .groupby method. Once we’ve created a groupby DataFrame, we

can quickly calculate summary statistics by a group of our choice.

# Group data by sex

grouped_data = surveys_df.groupby('sex')

The pandas function describe will return descriptive stats including: mean,

median, max, min, std and count for a particular column in the data. Pandas’

describe function will only return summary values for columns containing

numeric data.

# Summary statistics for all numeric columns by sex

grouped_data.describe()

# Provide the mean for each numeric column by sex

grouped_data.mean()

grouped_data.mean() OUTPUT:

record_id month day year plot_id \

sex

F 18036.412046 6.583047 16.007138 1990.644997 11.440854

M 17754.835601 6.392668 16.184286 1990.480401 11.098282

hindfoot_length weight

sex

F 28.836780 42.170555

M 29.709578 42.995379

The groupby command is powerful in that it allows us to quickly generate

summary stats.

Challenge - Summary Data

- How many recorded individuals are female

Fand how many maleM?- What happens when you group by two columns using the following syntax and then calculate mean values?

grouped_data2 = surveys_df.groupby(['plot_id', 'sex'])grouped_data2.mean()- Summarize weight values for each site in your data. HINT: you can use the following syntax to only create summary statistics for one column in your data.

by_site['weight'].describe()Did you get #3 right?

A Snippet of the Output from challenge 3 looks like:

site 1 count 1903.000000 mean 51.822911 std 38.176670 min 4.000000 25% 30.000000 50% 44.000000 75% 53.000000 max 231.000000 ...

Quickly Creating Summary Counts in Pandas

Let’s next count the number of samples for each species. We can do this in a few

ways, but we’ll use groupby combined with a count() method.

# Count the number of samples by species

species_counts = surveys_df.groupby('species_id')['record_id'].count()

print(species_counts)

Or, we can also count just the rows that have the species “DO”:

surveys_df.groupby('species_id')['record_id'].count()['DO']

Challenge - Make a list

What’s another way to create a list of species and associated

countof the records in the data? Hint: you can performcount,min, etc. functions on groupby DataFrames in the same way you can perform them on regular DataFrames.

Basic Math Functions

If we wanted to, we could perform math on an entire column of our data. For example let’s multiply all weight values by 2. A more practical use of this might be to normalize the data according to a mean, area, or some other value calculated from our data.

# Multiply all weight values by 2

surveys_df['weight']*2

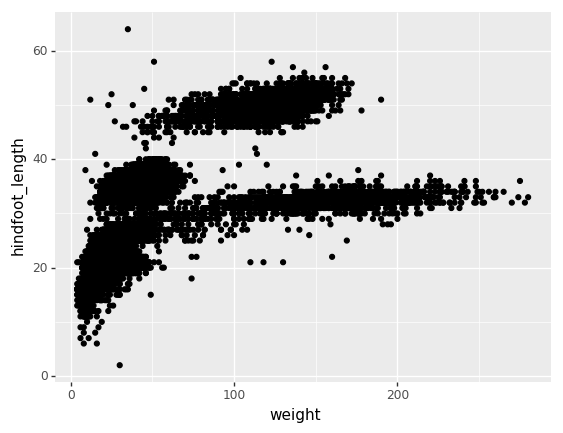

Quick & Easy Plotting Data Using Pandas

We can plot our summary stats using Pandas, too.

# Make sure figures appear inline in Ipython Notebook

%matplotlib inline

# Create a quick bar chart

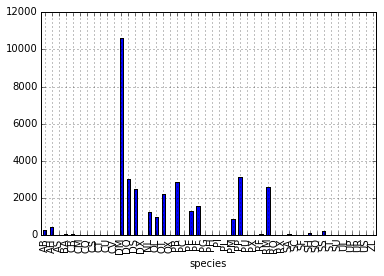

species_counts.plot(kind='bar');

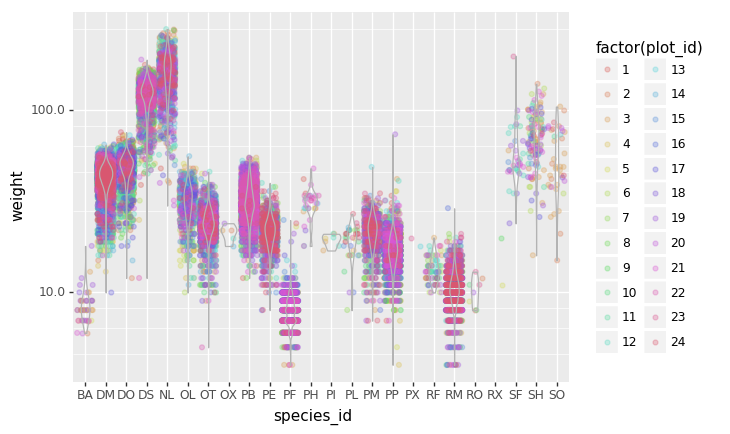

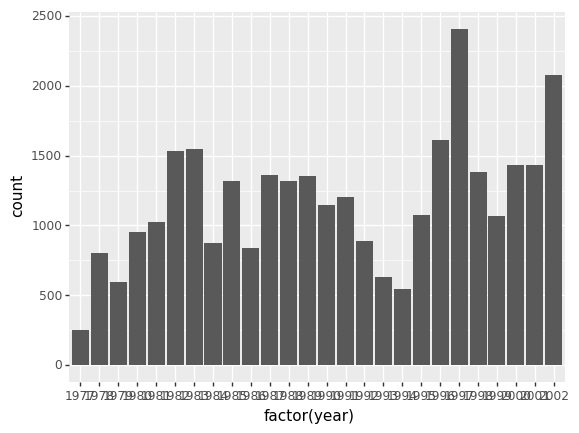

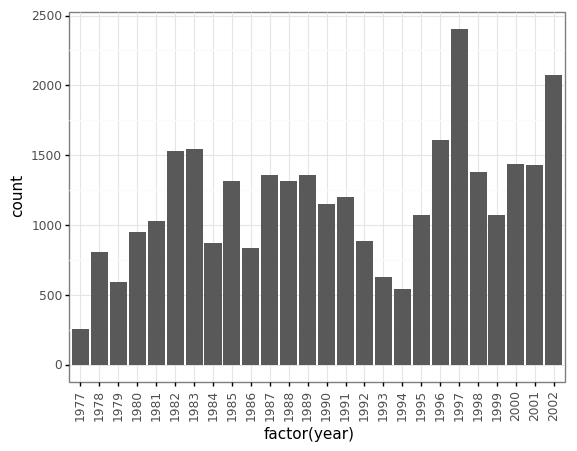

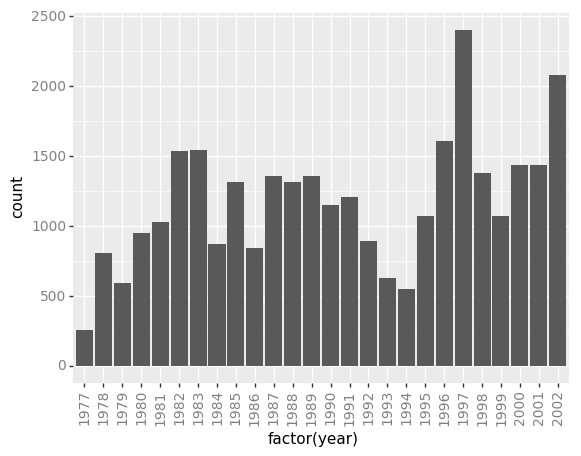

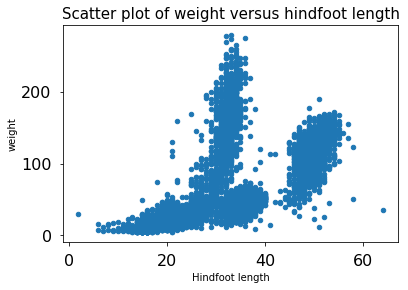

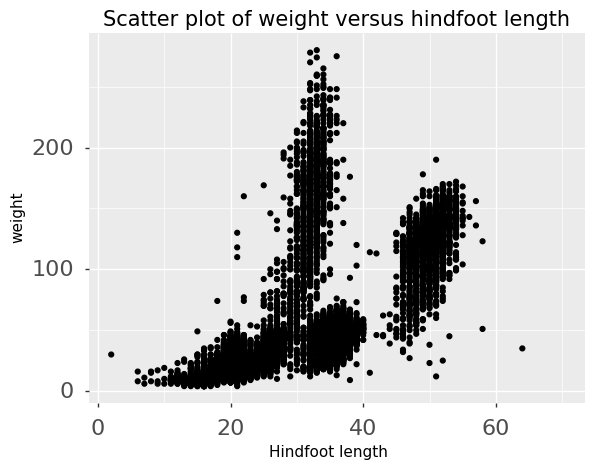

Count per species site

Count per species site

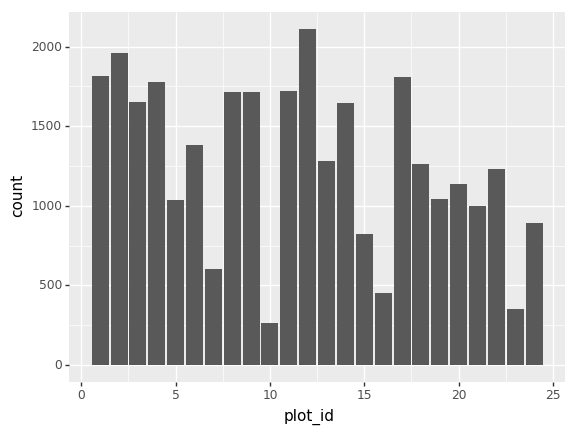

We can also look at how many animals were captured in each site:

total_count = surveys_df.groupby('plot_id')['record_id'].nunique()

# Let's plot that too

total_count.plot(kind='bar');

Challenge - Plots

- Create a plot of average weight across all species per site.

- Create a plot of total males versus total females for the entire dataset.

Summary Plotting Challenge

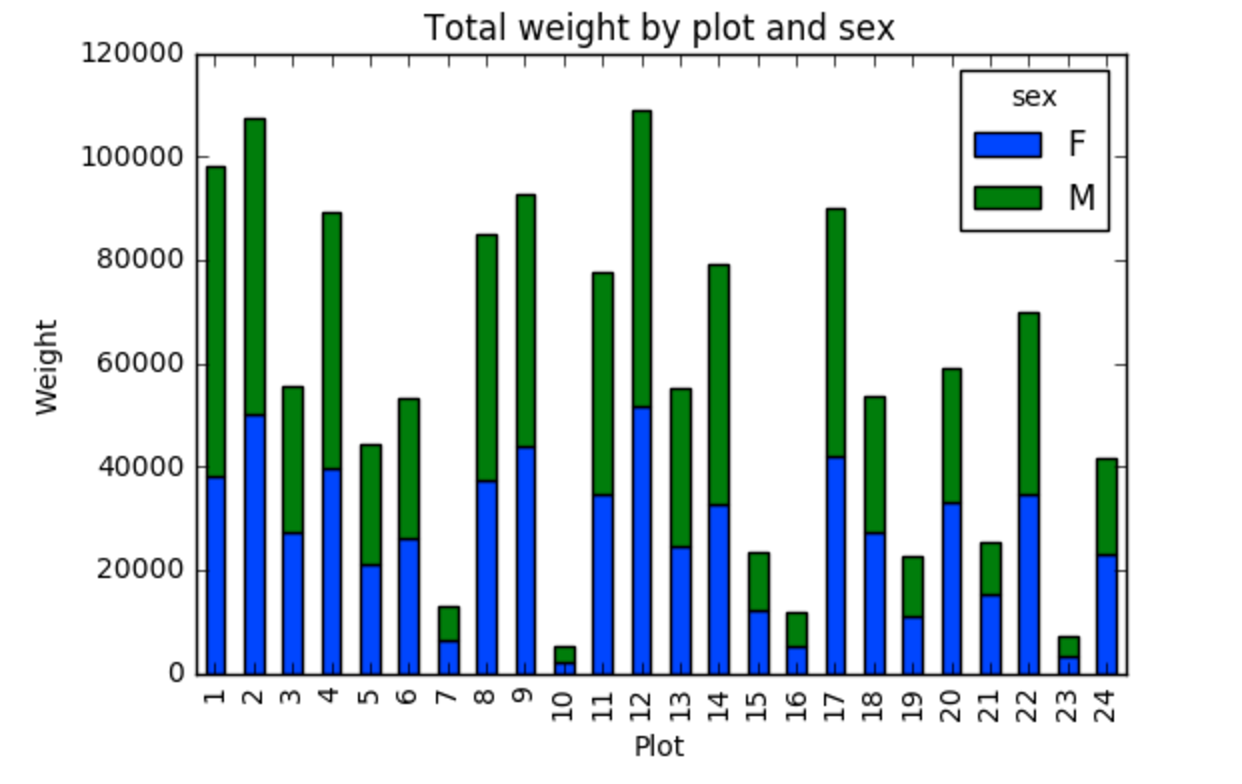

Create a stacked bar plot, with weight on the Y axis, and the stacked variable being sex. The plot should show total weight by sex for each site. Some tips are below to help you solve this challenge:

- For more information on pandas plots, see pandas’ documentation page on visualization.

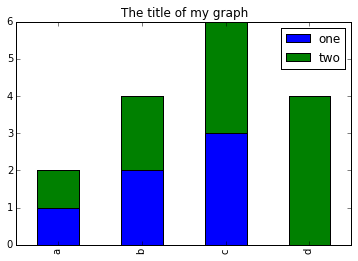

- You can use the code that follows to create a stacked bar plot but the data to stack need to be in individual columns. Here’s a simple example with some data where ‘a’, ‘b’, and ‘c’ are the groups, and ‘one’ and ‘two’ are the subgroups.

d = {'one' : pd.Series([1., 2., 3.], index=['a', 'b', 'c']), 'two' : pd.Series([1., 2., 3., 4.], index=['a', 'b', 'c', 'd'])} pd.DataFrame(d)shows the following data

one two a 1 1 b 2 2 c 3 3 d NaN 4We can plot the above with

# Plot stacked data so columns 'one' and 'two' are stacked my_df = pd.DataFrame(d) my_df.plot(kind='bar', stacked=True, title="The title of my graph")

- You can use the

.unstack()method to transform grouped data into columns for each plotting. Try running.unstack()on some DataFrames above and see what it yields.Start by transforming the grouped data (by site and sex) into an unstacked layout, then create a stacked plot.

Solution to Summary Challenge

First we group data by site and by sex, and then calculate a total for each site.

by_site_sex = surveys_df.groupby(['plot_id', 'sex']) site_sex_count = by_site_sex['weight'].sum()This calculates the sums of weights for each sex within each site as a table

site sex plot_id sex 1 F 38253 M 59979 2 F 50144 M 57250 3 F 27251 M 28253 4 F 39796 M 49377 <other sites removed for brevity>Below we’ll use

.unstack()on our grouped data to figure out the total weight that each sex contributed to each site.by_site_sex = surveys_df.groupby(['plot_id', 'sex']) site_sex_count = by_site_sex['weight'].sum() site_sex_count.unstack()The

unstackmethod above will display the following output:sex F M plot_id 1 38253 59979 2 50144 57250 3 27251 28253 4 39796 49377 <other sites removed for brevity>Now, create a stacked bar plot with that data where the weights for each sex are stacked by site.

Rather than display it as a table, we can plot the above data by stacking the values of each sex as follows:

by_site_sex = surveys_df.groupby(['plot_id', 'sex']) site_sex_count = by_site_sex['weight'].sum() spc = site_sex_count.unstack() s_plot = spc.plot(kind='bar', stacked=True, title="Total weight by site and sex") s_plot.set_ylabel("Weight") s_plot.set_xlabel("Plot")

Key Points

Libraries enable us to extend the functionality of Python.

Pandas is a popular library for working with data.

A Dataframe is a Pandas data structure that allows one to access data by column (name or index) or row.

Aggregating data using the

groupby()function enables you to generate useful summaries of data quickly.Plots can be created from DataFrames or subsets of data that have been generated with

groupby().

Indexing, Slicing and Subsetting DataFrames in Python

Overview

Teaching: 30 min

Exercises: 30 minQuestions

How can I access specific data within my data set?

How can Python and Pandas help me to analyse my data?

Objectives

Describe what 0-based indexing is.

Manipulate and extract data using column headings and index locations.

Employ slicing to select sets of data from a DataFrame.

Employ label and integer-based indexing to select ranges of data in a dataframe.

Reassign values within subsets of a DataFrame.

Create a copy of a DataFrame.

Query / select a subset of data using a set of criteria using the following operators:

==,!=,>,<,>=,<=.Locate subsets of data using masks.

Describe BOOLEAN objects in Python and manipulate data using BOOLEANs.

In the first episode of this lesson, we read a CSV file into a pandas’ DataFrame. We learned how to:

- save a DataFrame to a named object,

- perform basic math on data,

- calculate summary statistics, and

- create plots based on the data we loaded into pandas.

In this lesson, we will explore ways to access different parts of the data using:

- indexing,

- slicing, and

- subsetting.

Loading our data

We will continue to use the surveys dataset that we worked with in the last episode. Let’s reopen and read in the data again:

# Make sure pandas is loaded

import pandas as pd

# Read in the survey CSV

surveys_df = pd.read_csv("data/surveys.csv")

Indexing and Slicing in Python

We often want to work with subsets of a DataFrame object. There are different ways to accomplish this including: using labels (column headings), numeric ranges, or specific x,y index locations.

Selecting data using Labels (Column Headings)

We use square brackets [] to select a subset of a Python object. For example,

we can select all data from a column named species_id from the surveys_df

DataFrame by name. There are two ways to do this:

# TIP: use the .head() method we saw earlier to make output shorter

# Method 1: select a 'subset' of the data using the column name

surveys_df['species_id']

# Method 2: use the column name as an 'attribute'; gives the same output

surveys_df.species_id

We can also create a new object that contains only the data within the

species_id column as follows:

# Creates an object, surveys_species, that only contains the `species_id` column

surveys_species = surveys_df['species_id']

We can pass a list of column names too, as an index to select columns in that order. This is useful when we need to reorganize our data.

NOTE: If a column name is not contained in the DataFrame, an exception (error) will be raised.

# Select the species and plot columns from the DataFrame

surveys_df[['species_id', 'plot_id']]

# What happens when you flip the order?

surveys_df[['plot_id', 'species_id']]

# What happens if you ask for a column that doesn't exist?

surveys_df['speciess']

Python tells us what type of error it is in the traceback, at the bottom it says

KeyError: 'speciess' which means that speciess is not a valid column name (nor a valid key in

the related Python data type dictionary).

Reminder

The Python language and its modules (such as Pandas) define reserved words that should not be used as identifiers when assigning objects and variable names. Examples of reserved words in Python include Boolean values

TrueandFalse, operatorsand,or, andnot, among others. The full list of reserved words for Python version 3 is provided at https://docs.python.org/3/reference/lexical_analysis.html#identifiers.When naming objects and variables, it’s also important to avoid using the names of built-in data structures and methods. For example, a list is a built-in data type. It is possible to use the word ‘list’ as an identifier for a new object, for example

list = ['apples', 'oranges', 'bananas']. However, you would then be unable to create an empty list usinglist()or convert a tuple to a list usinglist(sometuple).

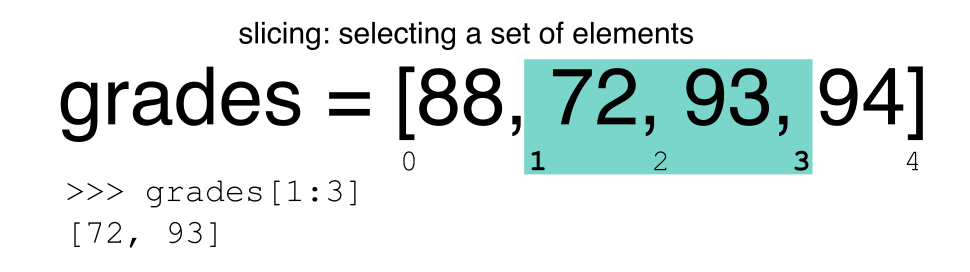

Extracting Range based Subsets: Slicing

Reminder

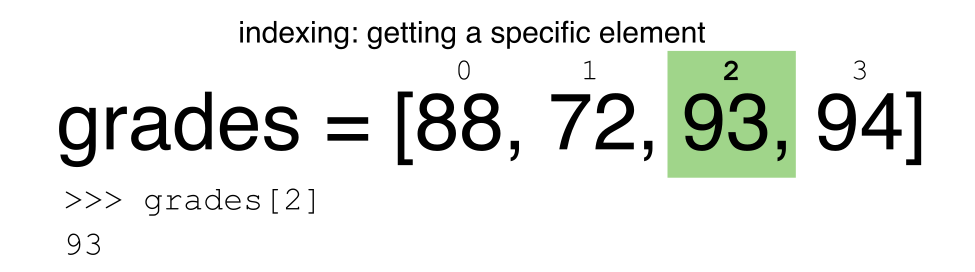

Python uses 0-based indexing.

Let’s remind ourselves that Python uses 0-based

indexing. This means that the first element in an object is located at position

0. This is different from other tools like R and Matlab that index elements

within objects starting at 1.

# Create a list of numbers:

a = [1, 2, 3, 4, 5]

Challenge - Extracting data

What value does the code below return?

a[0]How about this:

a[5]In the example above, calling

a[5]returns an error. Why is that?What about?

a[len(a)]

Slicing Subsets of Rows in Python

Slicing using the [] operator selects a set of rows and/or columns from a

DataFrame. To slice out a set of rows, you use the following syntax:

data[start:stop]. When slicing in pandas the start bound is included in the

output. The stop bound is one step BEYOND the row you want to select. So if you

want to select rows 0, 1 and 2 your code would look like this:

# Select rows 0, 1, 2 (row 3 is not selected)

surveys_df[0:3]

The stop bound in Python is different from what you might be used to in languages like Matlab and R.

# Select the first 5 rows (rows 0, 1, 2, 3, 4)

surveys_df[:5]

# Select the last element in the list

# (the slice starts at the last element, and ends at the end of the list)

surveys_df[-1:]

We can also reassign values within subsets of our DataFrame.

But before we do that, let’s look at the difference between the concept of copying objects and the concept of referencing objects in Python.

Copying Objects vs Referencing Objects in Python

Let’s start with an example:

# Using the 'copy() method'

true_copy_surveys_df = surveys_df.copy()

# Using the '=' operator

ref_surveys_df = surveys_df

You might think that the code ref_surveys_df = surveys_df creates a fresh

distinct copy of the surveys_df DataFrame object. However, using the =

operator in the simple statement y = x does not create a copy of our

DataFrame. Instead, y = x creates a new variable y that references the

same object that x refers to. To state this another way, there is only

one object (the DataFrame), and both x and y refer to it.

In contrast, the copy() method for a DataFrame creates a true copy of the

DataFrame.

Let’s look at what happens when we reassign the values within a subset of the DataFrame that references another DataFrame object:

# Assign the value `0` to the first three rows of data in the DataFrame

ref_surveys_df[0:3] = 0

Let’s try the following code:

# ref_surveys_df was created using the '=' operator

ref_surveys_df.head()

# surveys_df is the original dataframe

surveys_df.head()

What is the difference between these two dataframes?

When we assigned the first 3 columns the value of 0 using the

ref_surveys_df DataFrame, the surveys_df DataFrame is modified too.

Remember we created the reference ref_surveys_df object above when we did

ref_surveys_df = surveys_df. Remember surveys_df and ref_surveys_df

refer to the same exact DataFrame object. If either one changes the object,

the other will see the same changes to the reference object.

To review and recap:

-

Copy uses the dataframe’s

copy()methodtrue_copy_surveys_df = surveys_df.copy() -

A Reference is created using the

=operatorref_surveys_df = surveys_df

Okay, that’s enough of that. Let’s create a brand new clean dataframe from the original data CSV file.

surveys_df = pd.read_csv("data/surveys.csv")

Slicing Subsets of Rows and Columns in Python

We can select specific ranges of our data in both the row and column directions using either label or integer-based indexing.

locis primarily label based indexing. Integers may be used but they are interpreted as a label.ilocis primarily integer based indexing

To select a subset of rows and columns from our DataFrame, we can use the

iloc method. For example, we can select month, day and year (columns 2, 3

and 4 if we start counting at 1), like this:

# iloc[row slicing, column slicing]

surveys_df.iloc[0:3, 1:4]

which gives the output

month day year

0 7 16 1977

1 7 16 1977

2 7 16 1977

Notice that we asked for a slice from 0:3. This yielded 3 rows of data. When you ask for 0:3, you are actually telling Python to start at index 0 and select rows 0, 1, 2 up to but not including 3.

Let’s explore some other ways to index and select subsets of data:

# Select all columns for rows of index values 0 and 10

surveys_df.loc[[0, 10], :]

# What does this do?

surveys_df.loc[0, ['species_id', 'plot_id', 'weight']]

# What happens when you type the code below?

surveys_df.loc[[0, 10, 35549], :]

NOTE: Labels must be found in the DataFrame or you will get a KeyError.

Indexing by labels loc differs from indexing by integers iloc.

With loc, both the start bound and the stop bound are inclusive. When using

loc, integers can be used, but the integers refer to the

index label and not the position. For example, using loc and select 1:4

will get a different result than using iloc to select rows 1:4.

We can also select a specific data value using a row and

column location within the DataFrame and iloc indexing:

# Syntax for iloc indexing to finding a specific data element

dat.iloc[row, column]

In this iloc example,

surveys_df.iloc[2, 6]

gives the output

'F'

Remember that Python indexing begins at 0. So, the index location [2, 6] selects the element that is 3 rows down and 7 columns over in the DataFrame.

Challenge - Range

What happens when you execute:

surveys_df[0:1]surveys_df[:4]surveys_df[:-1]What happens when you call:

surveys_df.iloc[0:4, 1:4]surveys_df.loc[0:4, 1:4]

- How are the two commands different?

Subsetting Data using Criteria

We can also select a subset of our data using criteria. For example, we can select all rows that have a year value of 2002:

surveys_df[surveys_df.year == 2002]

Which produces the following output:

record_id month day year plot_id species_id sex hindfoot_length weight

33320 33321 1 12 2002 1 DM M 38 44

33321 33322 1 12 2002 1 DO M 37 58

33322 33323 1 12 2002 1 PB M 28 45

33323 33324 1 12 2002 1 AB NaN NaN NaN

33324 33325 1 12 2002 1 DO M 35 29

...

35544 35545 12 31 2002 15 AH NaN NaN NaN

35545 35546 12 31 2002 15 AH NaN NaN NaN

35546 35547 12 31 2002 10 RM F 15 14

35547 35548 12 31 2002 7 DO M 36 51

35548 35549 12 31 2002 5 NaN NaN NaN NaN

[2229 rows x 9 columns]

Or we can select all rows that do not contain the year 2002:

surveys_df[surveys_df.year != 2002]

We can define sets of criteria too:

surveys_df[(surveys_df.year >= 1980) & (surveys_df.year <= 1985)]

Python Syntax Cheat Sheet

We can use the syntax below when querying data by criteria from a DataFrame. Experiment with selecting various subsets of the “surveys” data.

- Equals:

== - Not equals:

!= - Greater than, less than:

>or< - Greater than or equal to

>= - Less than or equal to

<=

Challenge - Queries

Select a subset of rows in the

surveys_dfDataFrame that contain data from the year 1999 and that contain weight values less than or equal to 8. How many rows did you end up with? What did your neighbor get?You can use the

isincommand in Python to query a DataFrame based upon a list of values as follows:surveys_df[surveys_df['species_id'].isin([listGoesHere])]Use the

isinfunction to find all plots that contain particular species in the “surveys” DataFrame. How many records contain these values?

Experiment with other queries. Create a query that finds all rows with a weight value > or equal to 0.

The

~symbol in Python can be used to return the OPPOSITE of the selection that you specify in Python. It is equivalent to is not in. Write a query that selects all rows with sex NOT equal to ‘M’ or ‘F’ in the “surveys” data.

Using masks to identify a specific condition

A mask can be useful to locate where a particular subset of values exist or

don’t exist - for example, NaN, or “Not a Number” values. To understand masks,

we also need to understand BOOLEAN objects in Python.

Boolean values include True or False. For example,

# Set x to 5

x = 5

# What does the code below return?

x > 5

# How about this?

x == 5

When we ask Python whether x is greater than 5, it returns False.

This is Python’s way to say “No”. Indeed, the value of x is 5,

and 5 is not greater than 5.

To create a boolean mask:

- Set the True / False criteria (e.g.

values > 5 = True) - Python will then assess each value in the object to determine whether the value meets the criteria (True) or not (False).

- Python creates an output object that is the same shape as the original

object, but with a

TrueorFalsevalue for each index location.

Let’s try this out. Let’s identify all locations in the survey data that have

null (missing or NaN) data values. We can use the isnull method to do this.

The isnull method will compare each cell with a null value. If an element

has a null value, it will be assigned a value of True in the output object.

pd.isnull(surveys_df)

A snippet of the output is below:

record_id month day year plot_id species_id sex hindfoot_length weight

0 False False False False False False False False True

1 False False False False False False False False True

2 False False False False False False False False True

3 False False False False False False False False True

4 False False False False False False False False True

[35549 rows x 9 columns]

To select the rows where there are null values, we can use the mask as an index to subset our data as follows:

# To select just the rows with NaN values, we can use the 'any()' method

surveys_df[pd.isnull(surveys_df).any(axis=1)]

Note that the weight column of our DataFrame contains many null or NaN

values. We will explore ways of dealing with this in the next episode on Data Types and Formats.

We can run isnull on a particular column too. What does the code below do?

# What does this do?

empty_weights = surveys_df[pd.isnull(surveys_df['weight'])]['weight']

print(empty_weights)

Let’s take a minute to look at the statement above. We are using the Boolean

object pd.isnull(surveys_df['weight']) as an index to surveys_df. We are

asking Python to select rows that have a NaN value of weight.

Challenge - Putting it all together

Create a new DataFrame that only contains observations with sex values that are not female or male. Assign each sex value in the new DataFrame to a new value of ‘x’. Determine the number of null values in the subset.

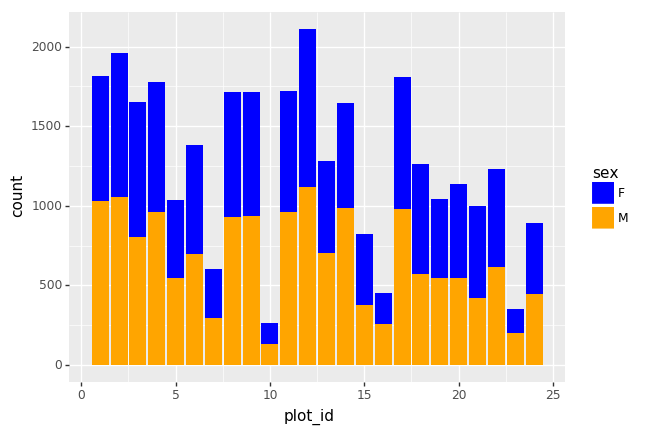

Create a new DataFrame that contains only observations that are of sex male or female and where weight values are greater than 0. Create a stacked bar plot of average weight by plot with male vs female values stacked for each plot.

Key Points

In Python, portions of data can be accessed using indices, slices, column headings, and condition-based subsetting.

Python uses 0-based indexing, in which the first element in a list, tuple or any other data structure has an index of 0.

Pandas enables common data exploration steps such as data indexing, slicing and conditional subsetting.

Data Types and Formats

Overview

Teaching: 20 min

Exercises: 25 minQuestions

What types of data can be contained in a DataFrame?

Why is the data type important?

Objectives

Describe how information is stored in a Python DataFrame.

Define the two main types of data in Python: text and numerics.

Examine the structure of a DataFrame.

Modify the format of values in a DataFrame.

Describe how data types impact operations.

Define, manipulate, and interconvert integers and floats in Python.

Analyze datasets having missing/null values (NaN values).

Write manipulated data to a file.

The format of individual columns and rows will impact analysis performed on a dataset read into Python. For example, you can’t perform mathematical calculations on a string (text formatted data). This might seem obvious, however sometimes numeric values are read into Python as strings. In this situation, when you then try to perform calculations on the string-formatted numeric data, you get an error.

In this lesson we will review ways to explore and better understand the structure and format of our data.

Types of Data

How information is stored in a DataFrame or a Python object affects what we can do with it and the outputs of calculations as well. There are two main types of data that we will explore in this lesson: numeric and text data types.

Numeric Data Types

Numeric data types include integers and floats. A floating point (known as a float) number has decimal points even if that decimal point value is 0. For example: 1.13, 2.0, 1234.345. If we have a column that contains both integers and floating point numbers, Pandas will assign the entire column to the float data type so the decimal points are not lost.

An integer will never have a decimal point. Thus if we wanted to store 1.13 as

an integer it would be stored as 1. Similarly, 1234.345 would be stored as 1234. You

will often see the data type Int64 in Python which stands for 64 bit integer. The 64

refers to the memory allocated to store data in each cell which effectively

relates to how many digits it can store in each “cell”. Allocating space ahead of time

allows computers to optimize storage and processing efficiency.

Text Data Type

Text data type is known as Strings in Python, or Objects in Pandas. Strings can contain numbers and / or characters. For example, a string might be a word, a sentence, or several sentences. A Pandas object might also be a plot name like ‘plot1’. A string can also contain or consist of numbers. For instance, ‘1234’ could be stored as a string, as could ‘10.23’. However strings that contain numbers can not be used for mathematical operations!

Pandas and base Python use slightly different names for data types. More on this is in the table below:

| Pandas Type | Native Python Type | Description |

|---|---|---|

| object | string | The most general dtype. Will be assigned to your column if column has mixed types (numbers and strings). |

| int64 | int | Numeric characters. 64 refers to the memory allocated to hold this character. |

| float64 | float | Numeric characters with decimals. If a column contains numbers and NaNs (see below), pandas will default to float64, in case your missing value has a decimal. |

| datetime64, timedelta[ns] | N/A (but see the datetime module in Python’s standard library) | Values meant to hold time data. Look into these for time series experiments. |

Checking the format of our data

Now that we’re armed with a basic understanding of numeric and text data

types, let’s explore the format of our survey data. We’ll be working with the

same surveys.csv dataset that we’ve used in previous lessons.

# Make sure pandas is loaded

import pandas as pd

# Note that pd.read_csv is used because we imported pandas as pd

surveys_df = pd.read_csv("data/surveys.csv")

Remember that we can check the type of an object like this:

type(surveys_df)

pandas.core.frame.DataFrame

Next, let’s look at the structure of our surveys data. In pandas, we can check

the type of one column in a DataFrame using the syntax

dataFrameName[column_name].dtype:

surveys_df['sex'].dtype

dtype('O')

A type ‘O’ just stands for “object” which in Pandas’ world is a string (text).

surveys_df['record_id'].dtype

dtype('int64')

The type int64 tells us that Python is storing each value within this column

as a 64 bit integer. We can use the dat.dtypes command to view the data type

for each column in a DataFrame (all at once).

surveys_df.dtypes

which returns:

record_id int64

month int64

day int64

year int64

plot_id int64

species_id object

sex object

hindfoot_length float64

weight float64

dtype: object

Note that most of the columns in our Survey data are of type int64. This means

that they are 64 bit integers. But the weight column is a floating point value

which means it contains decimals. The species_id and sex columns are objects which

means they contain strings.

Working With Integers and Floats

So we’ve learned that computers store numbers in one of two ways: as integers or as floating-point numbers (or floats). Integers are the numbers we usually count with. Floats have fractional parts (decimal places). Let’s next consider how the data type can impact mathematical operations on our data. Addition, subtraction, division and multiplication work on floats and integers as we’d expect.

print(5+5)

10

print(24-4)

20

If we divide one integer by another, we get a float. The result on Python 3 is different than in Python 2, where the result is an integer (integer division).

print(5/9)

0.5555555555555556

print(10/3)

3.3333333333333335

We can also convert a floating point number to an integer or an integer to floating point number. Notice that Python by default rounds down when it converts from floating point to integer.

# Convert a to an integer

a = 7.83

int(a)

7

# Convert b to a float

b = 7

float(b)

7.0

Working With Our Survey Data

Getting back to our data, we can modify the format of values within our data, if

we want. For instance, we could convert the record_id field to floating point

values.

# Convert the record_id field from an integer to a float

surveys_df['record_id'] = surveys_df['record_id'].astype('float64')

surveys_df['record_id'].dtype

dtype('float64')

Changing Types

Try converting the column

plot_idto floats usingsurveys_df.plot_id.astype("float")Next try converting

weightto an integer. What goes wrong here? What is Pandas telling you? We will talk about some solutions to this later.

Missing Data Values - NaN

What happened in the last challenge activity? Notice that this throws a value error:

ValueError: Cannot convert NA to integer. If we look at the weight column in the surveys

data we notice that there are NaN (Not a Number) values. NaN values are undefined

values that cannot be represented mathematically. Pandas, for example, will read

an empty cell in a CSV or Excel sheet as a NaN. NaNs have some desirable properties: if we

were to average the weight column without replacing our NaNs, Python would know to skip

over those cells.

surveys_df['weight'].mean()

42.672428212991356

Dealing with missing data values is always a challenge. It’s sometimes hard to know why values are missing - was it because of a data entry error? Or data that someone was unable to collect? Should the value be 0? We need to know how missing values are represented in the dataset in order to make good decisions. If we’re lucky, we have some metadata that will tell us more about how null values were handled.

For instance, in some disciplines, like Remote Sensing, missing data values are often defined as -9999. Having a bunch of -9999 values in your data could really alter numeric calculations. Often in spreadsheets, cells are left empty where no data are available. Pandas will, by default, replace those missing values with NaN. However it is good practice to get in the habit of intentionally marking cells that have no data, with a no data value! That way there are no questions in the future when you (or someone else) explores your data.

Where Are the NaN’s?

Let’s explore the NaN values in our data a bit further. Using the tools we learned in lesson 02, we can figure out how many rows contain NaN values for weight. We can also create a new subset from our data that only contains rows with weight values > 0 (i.e., select meaningful weight values):

len(surveys_df[pd.isnull(surveys_df.weight)])

# How many rows have weight values?

len(surveys_df[surveys_df.weight > 0])

We can replace all NaN values with zeroes using the .fillna() method (after

making a copy of the data so we don’t lose our work):

df1 = surveys_df.copy()

# Fill all NaN values with 0

df1['weight'] = df1['weight'].fillna(0)

However NaN and 0 yield different analysis results. The mean value when NaN values are replaced with 0 is different from when NaN values are simply thrown out or ignored.

df1['weight'].mean()

38.751976145601844

We can fill NaN values with any value that we chose. The code below fills all NaN values with a mean for all weight values.

df1['weight'] = surveys_df['weight'].fillna(surveys_df['weight'].mean())

We could also chose to create a subset of our data, only keeping rows that do not contain NaN values.

The point is to make conscious decisions about how to manage missing data. This is where we think about how our data will be used and how these values will impact the scientific conclusions made from the data.

Python gives us all of the tools that we need to account for these issues. We just need to be cautious about how the decisions that we make impact scientific results.

Counting

Count the number of missing values per column.

Hint

The method

.count()gives you the number of non-NA observations per column. Try looking to the.isnull()method.

Writing Out Data to CSV

We’ve learned about using manipulating data to get desired outputs. But we’ve also discussed keeping data that has been manipulated separate from our raw data. Something we might be interested in doing is working with only the columns that have full data. First, let’s reload the data so we’re not mixing up all of our previous manipulations.

surveys_df = pd.read_csv("data/surveys.csv")

Next, let’s drop all the rows that contain missing values. We will use the command dropna.

By default, dropna removes rows that contain missing data for even just one column.

df_na = surveys_df.dropna()

If you now type df_na, you should observe that the resulting DataFrame has 30676 rows

and 9 columns, much smaller than the 35549 row original.

We can now use the to_csv command to export a DataFrame in CSV format. Note that the code

below will by default save the data into the current working directory. We can

save it to a different folder by adding the foldername and a slash before the filename:

df.to_csv('foldername/out.csv'). We use ‘index=False’ so that

pandas doesn’t include the index number for each line.

# Write DataFrame to CSV

df_na.to_csv('data_output/surveys_complete.csv', index=False)

We will use this data file later in the workshop. Check out your working directory to make sure the CSV wrote out properly, and that you can open it! If you want, try to bring it back into Python to make sure it imports properly.

Recap

What we’ve learned:

- How to explore the data types of columns within a DataFrame

- How to change the data type

- What NaN values are, how they might be represented, and what this means for your work

- How to replace NaN values, if desired

- How to use

to_csvto write manipulated data to a file.

Key Points

Pandas uses other names for data types than Python, for example:

objectfor textual data.A column in a DataFrame can only have one data type.

The data type in a DataFrame’s single column can be checked using

dtype.Make conscious decisions about how to manage missing data.

A DataFrame can be saved to a CSV file using the

to_csvfunction.

Combining DataFrames with Pandas

Overview

Teaching: 20 min

Exercises: 25 minQuestions

Can I work with data from multiple sources?

How can I combine data from different data sets?

Objectives

Combine data from multiple files into a single DataFrame using merge and concat.

Combine two DataFrames using a unique ID found in both DataFrames.

Employ

to_csvto export a DataFrame in CSV format.Join DataFrames using common fields (join keys).

In many “real world” situations, the data that we want to use come in multiple

files. We often need to combine these files into a single DataFrame to analyze

the data. The pandas package provides various methods for combining

DataFrames including

merge and concat.

To work through the examples below, we first need to load the species and surveys files into pandas DataFrames. In iPython:

import pandas as pd

surveys_df = pd.read_csv("data/surveys.csv",

keep_default_na=False, na_values=[""])

surveys_df

record_id month day year plot species sex hindfoot_length weight

0 1 7 16 1977 2 NA M 32 NaN

1 2 7 16 1977 3 NA M 33 NaN

2 3 7 16 1977 2 DM F 37 NaN

3 4 7 16 1977 7 DM M 36 NaN

4 5 7 16 1977 3 DM M 35 NaN

... ... ... ... ... ... ... ... ... ...

35544 35545 12 31 2002 15 AH NaN NaN NaN

35545 35546 12 31 2002 15 AH NaN NaN NaN

35546 35547 12 31 2002 10 RM F 15 14

35547 35548 12 31 2002 7 DO M 36 51

35548 35549 12 31 2002 5 NaN NaN NaN NaN

[35549 rows x 9 columns]

species_df = pd.read_csv("data/species.csv",

keep_default_na=False, na_values=[""])

species_df

species_id genus species taxa

0 AB Amphispiza bilineata Bird

1 AH Ammospermophilus harrisi Rodent

2 AS Ammodramus savannarum Bird

3 BA Baiomys taylori Rodent

4 CB Campylorhynchus brunneicapillus Bird

.. ... ... ... ...

49 UP Pipilo sp. Bird

50 UR Rodent sp. Rodent

51 US Sparrow sp. Bird

52 ZL Zonotrichia leucophrys Bird

53 ZM Zenaida macroura Bird

[54 rows x 4 columns]

Take note that the read_csv method we used can take some additional options which

we didn’t use previously. Many functions in Python have a set of options that

can be set by the user if needed. In this case, we have told pandas to assign

empty values in our CSV to NaN keep_default_na=False, na_values=[""].

More about all of the read_csv options here.

Concatenating DataFrames

We can use the concat function in pandas to append either columns or rows from

one DataFrame to another. Let’s grab two subsets of our data to see how this

works.

# Read in first 10 lines of surveys table

survey_sub = surveys_df.head(10)

# Grab the last 10 rows

survey_sub_last10 = surveys_df.tail(10)

# Reset the index values to the second dataframe appends properly

survey_sub_last10 = survey_sub_last10.reset_index(drop=True)

# drop=True option avoids adding new index column with old index values

When we concatenate DataFrames, we need to specify the axis. axis=0 tells

pandas to stack the second DataFrame UNDER the first one. It will automatically

detect whether the column names are the same and will stack accordingly.

axis=1 will stack the columns in the second DataFrame to the RIGHT of the

first DataFrame. To stack the data vertically, we need to make sure we have the

same columns and associated column format in both datasets. When we stack

horizontally, we want to make sure what we are doing makes sense (i.e. the data are

related in some way).

# Stack the DataFrames on top of each other

vertical_stack = pd.concat([survey_sub, survey_sub_last10], axis=0)

# Place the DataFrames side by side

horizontal_stack = pd.concat([survey_sub, survey_sub_last10], axis=1)

Row Index Values and Concat

Have a look at the vertical_stack dataframe? Notice anything unusual?

The row indexes for the two data frames survey_sub and survey_sub_last10

have been repeated. We can reindex the new dataframe using the reset_index() method.

Writing Out Data to CSV

We can use the to_csv command to do export a DataFrame in CSV format. Note that the code

below will by default save the data into the current working directory. We can

save it to a different folder by adding the foldername and a slash to the file

vertical_stack.to_csv('foldername/out.csv'). We use the ‘index=False’ so that

pandas doesn’t include the index number for each line.

# Write DataFrame to CSV

vertical_stack.to_csv('data_output/out.csv', index=False)

Check out your working directory to make sure the CSV wrote out properly, and that you can open it! If you want, try to bring it back into Python to make sure it imports properly.

# For kicks read our output back into Python and make sure all looks good

new_output = pd.read_csv('data_output/out.csv', keep_default_na=False, na_values=[""])

Challenge - Combine Data

In the data folder, there are two survey data files:

surveys2001.csvandsurveys2002.csv. Read the data into Python and combine the files to make one new data frame. Create a plot of average plot weight by year grouped by sex. Export your results as a CSV and make sure it reads back into Python properly.

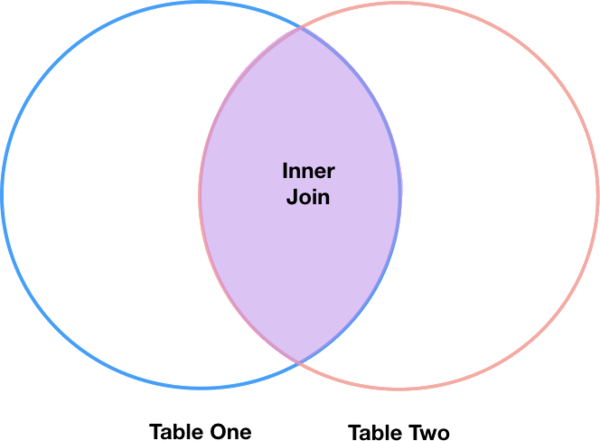

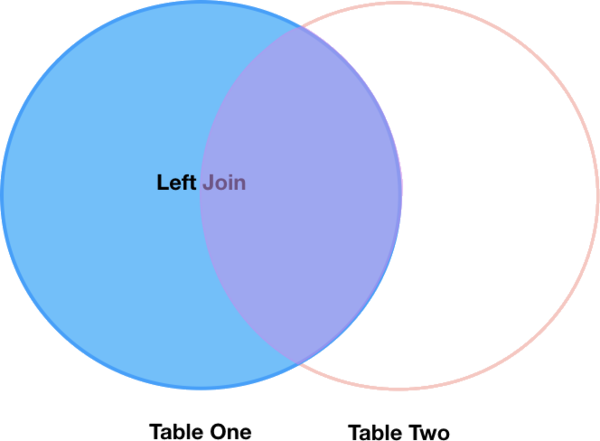

Joining DataFrames

When we concatenated our DataFrames we simply added them to each other - stacking them either vertically or side by side. Another way to combine DataFrames is to use columns in each dataset that contain common values (a common unique id). Combining DataFrames using a common field is called “joining”. The columns containing the common values are called “join key(s)”. Joining DataFrames in this way is often useful when one DataFrame is a “lookup table” containing additional data that we want to include in the other.

NOTE: This process of joining tables is similar to what we do with tables in an SQL database.

For example, the species.csv file that we’ve been working with is a lookup

table. This table contains the genus, species and taxa code for 55 species. The

species code is unique for each line. These species are identified in our survey

data as well using the unique species code. Rather than adding 3 more columns

for the genus, species and taxa to each of the 35,549 line Survey data table, we

can maintain the shorter table with the species information. When we want to

access that information, we can create a query that joins the additional columns

of information to the Survey data.

Storing data in this way has many benefits including:

- It ensures consistency in the spelling of species attributes (genus, species and taxa) given each species is only entered once. Imagine the possibilities for spelling errors when entering the genus and species thousands of times!

- It also makes it easy for us to make changes to the species information once without having to find each instance of it in the larger survey data.

- It optimizes the size of our data.

Joining Two DataFrames

To better understand joins, let’s grab the first 10 lines of our data as a

subset to work with. We’ll use the .head method to do this. We’ll also read

in a subset of the species table.

# Read in first 10 lines of surveys table

survey_sub = surveys_df.head(10)

# Import a small subset of the species data designed for this part of the lesson.

# It is stored in the data folder.

species_sub = pd.read_csv('data/speciesSubset.csv', keep_default_na=False, na_values=[""])

In this example, species_sub is the lookup table containing genus, species, and

taxa names that we want to join with the data in survey_sub to produce a new

DataFrame that contains all of the columns from both species_df and

survey_df.

Identifying join keys